Understanding Voice Cloning Technology

Voice cloning technology has advanced significantly thanks to the development of artificial intelligence algorithms. It can now replicate a person’s voice from only a short audio sample. This capability is driven primarily by deep learning neural networks, which analyze voice characteristics, speech patterns, and tonal nuances to produce a highly accurate reproduction of an individual’s voice. Recent innovations have also made the technology accessible to a wide range of users, which has pushed AI voice cloning regulation into the news. What regulations are institutions researching to deal with voice cloning?

The mechanics behind voice cloning involve two main steps: training and synthesis. During training, existing audio samples of the speaker are fed into an AI model, which learns to identify and replicate the speaker’s vocal attributes. During synthesis, the model generates new speech that closely resembles the original voice. The output is a deepfake voice, which can then be used in voice phishing (vishing) scams.

Scammers have noticed the rise of voice cloning technology and are increasingly using it to run sophisticated phone scams in 2026. In a fake kidnapping scam, for instance, a criminal may use the cloned voice of a family member to induce panic and pressure the victim into complying with the criminal’s demands. Understanding how to spot AI-generated voices is therefore crucial for personal safety.

The implications of voice cloning for personal security and privacy are profound. As the technology evolves, individuals must remain aware of the risks associated with AI voice cloning. By staying informed about the tools and software behind it, you can better protect yourself and your loved ones from potential threats. Discussing family safety tips at home, for instance, helps everyone stay alert to evolving scams.

The Mechanics of Vishing: How Scammers Operate

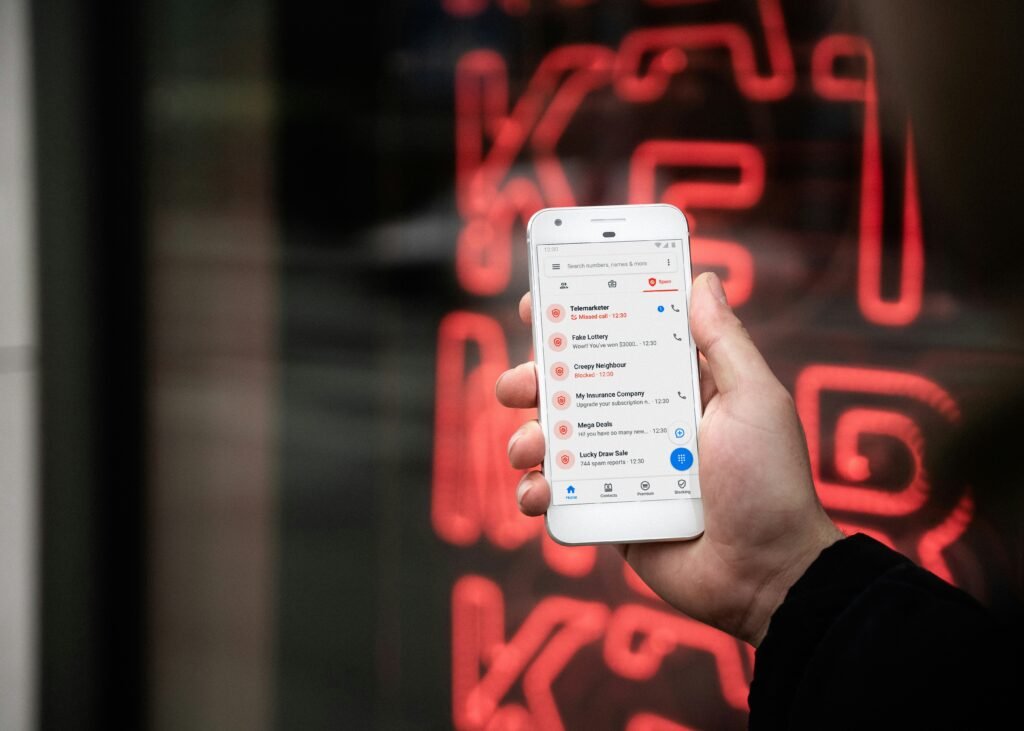

Vishing, or voice phishing, is a cunning strategy scammers use to manipulate victims over the telephone. With advanced AI voice cloning technology, scammers can create remarkably realistic audio that mimics trusted voices. These deepfake voices help establish a facade of legitimacy, which is often the first step in a successful scam.

Scammers typically initiate contact by calling potential victims. They claim to represent legitimate institutions such as banks or government agencies, or they pose as family members. They rely on psychological tactics, often invoking urgency or fear to prompt quick decisions. A common example is the fake kidnapping scam, in which the victim is led to believe that a loved one is in danger. The urgency of such a situation often blinds the victim to the warning signs of a suspicious call.

The sophistication of these calls has increased dramatically. Many scammers use information gleaned from social media and other public sources to tailor their narratives and make their impersonations more convincing, which makes it harder for victims to recognize an AI scam in progress. Vishing attacks can also involve emotional manipulation: scammers may feign distress or panic and urge victims to act immediately.

Real-world cases show that these tactics succeed against unsuspecting individuals. In 2026, several reports described phone scams that used deepfake voice technology to impersonate trusted acquaintances, catching victims off guard and resulting in significant financial losses.

Recognizing and Responding to Vishing Calls

As technology evolves, so do the tactics scammers employ. One particularly insidious form of fraud is vishing, or voice phishing. It has risen significantly alongside advances in AI voice cloning and deepfake voice technologies, which let scammers mimic legitimate voices convincingly. Identifying these fraudulent calls is crucial for safeguarding yourself and your family.

To recognize a vishing call, watch for key indicators. Scammers often sound urgent, pressuring you to act quickly without time to think. They might claim to be from a trusted organization, such as a bank or government agency, and request personal or financial information. Familiarize yourself with typical scam scripts, and stay alert for any request that seems out of place or overly aggressive.

Moreover, it’s important to manage the information you share over the phone. Always be cautious about providing sensitive details like Social Security numbers, banking information, or passwords. When you receive a suspicious call, it is advisable to verify the identity of the caller. Hang up and contact the organization directly using a phone number from their official website, rather than the one provided by the caller. This way, you can ensure that you are communicating with an authentic representative.

Communication within families is paramount. Agreeing on a protocol for handling potential scams, such as a code word for sensitive conversations, can enhance family safety. Sharing family safety tips and encouraging open discussions about AI scams also helps everyone stay alert and improves the family’s collective ability to spot and report potential threats. This is especially important given the rising trend of phone scams in 2026.

Proactive Measures Against Voice Cloning: Protecting Your Family Tonight

As AI voice cloning and deepfake voice technologies become increasingly sophisticated, families must adopt proactive measures to safeguard themselves against scams, particularly vishing, or voice phishing. One fundamental step is agreeing on how to verify unexpected phone calls. For instance, family members can share a secret code that only they know and ask for it during any suspicious call.

It is also essential to discuss openly how to recognize potential AI scams. Families should practice spotting AI-generated voices in situations such as fake kidnapping scams or financial phishing attempts. Clear guidelines on what personal information may be shared over the phone will further enhance family safety. Teach every member, especially children and elderly relatives, to be extra cautious with calls that seem urgent or demand immediate action.

Regular check-ins serve a dual purpose: they strengthen family bonds and reinforce the importance of security. A routine in which family members update each other on their activities makes it easier to notice potential vishing attempts and encourages everyone to report suspicious interactions. In effect, this creates a buddy system that strengthens everyone’s sense of security. It also helps to follow AI voice cloning regulation news to stay current on developments in the technology.

Finally, setting aside time for educational discussions about voice phishing and related phone scams, particularly the evolving tactics scammers are employing in 2026, helps all family members stay vigilant. By making conversations about safety commonplace, you equip everyone with the knowledge needed to navigate potential threats effectively. Protecting your family against the growing risks of AI voice cloning and vishing requires unity, preparation, and continuous communication.